AI-native customer operations.

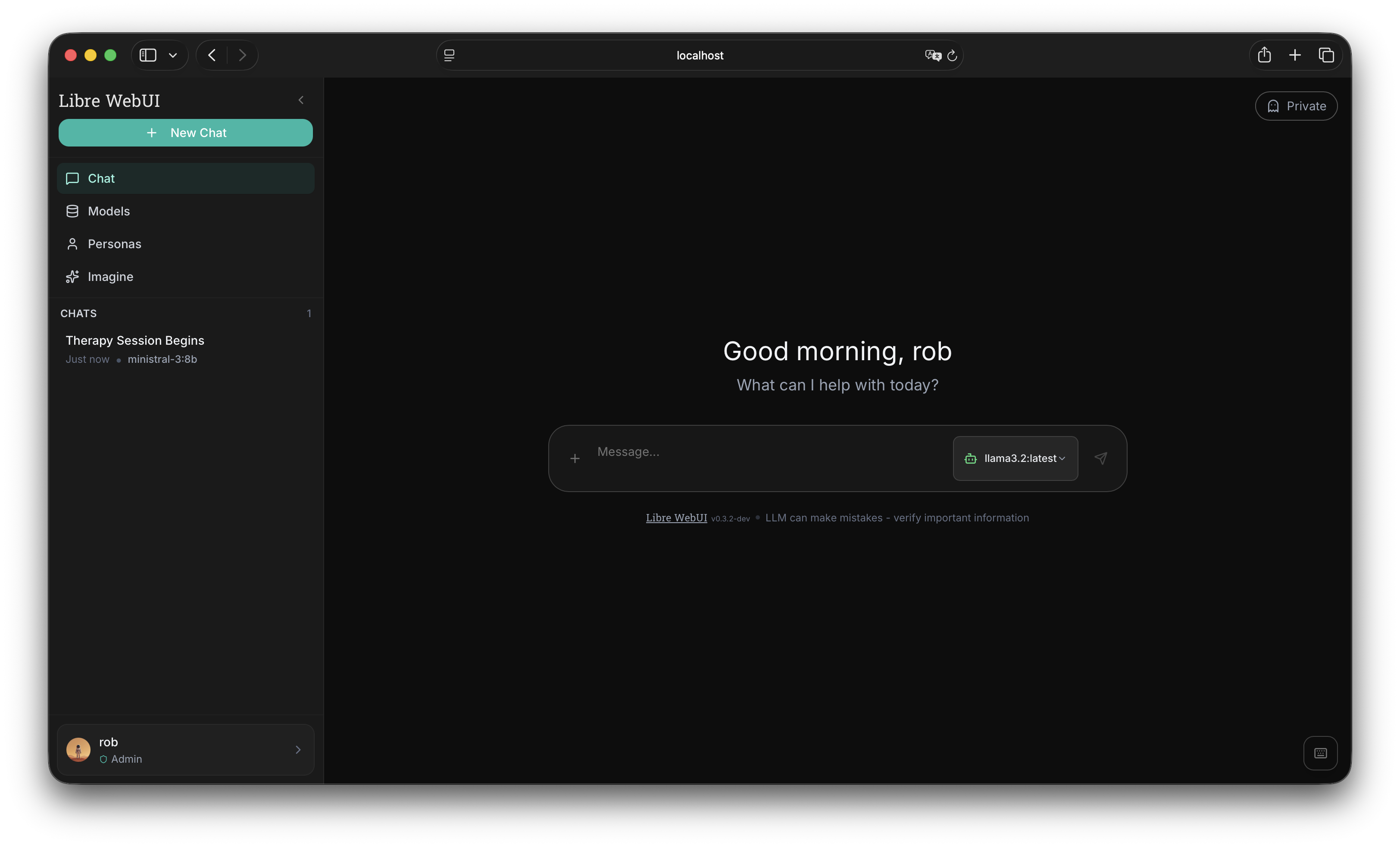

Kroonen AI builds the Libre ecosystem: AI support, local LLM interfaces, terminal-native agent tools, Twilio-powered phone systems, and model research for small teams that refuse bloated SaaS stacks.

From model research to real products

The same lab that trained Genesis also ships practical tools: chat, docs, terminal agents, phone, and customer workflows.

What We Do

AI products, communications systems, and local-first infrastructure — from prototype to production.

Pre-Training from Scratch

Training language models from scratch on consumer GPUs, with a custom architecture, tokenizer, and 60B-token multilingual dataset. No datacenter required.

Model Fine-Tuning

Custom fine-tuning of open-weight models for your specific use case, brand voice, and domain expertise.

Safety Evaluation

Comprehensive model evaluation aligned with frontier-lab methodologies, including red-team testing and benchmark analysis.

Applications & Communications

Custom web, mobile, and telephony applications with AI integration. React, TypeScript, Twilio, Cloudflare Workers, and native iOS.

Red Team & Security

Adversarial testing of AI systems and infrastructure. Prompt injection, jailbreak resistance, model extraction, and CBRN safety assessments.

AI Agents & Orchestration

Design and deployment of AI agent systems that work alongside your team. Multi-model orchestration, local deployment, and custom integrations.

Featured Projects

The Libre ecosystem: support chat, local AI, terminal agents, and programmable communications.

Latest from the Blog

Technical deep-dives from the Genesis training run.

Genesis 1B: Run 2 Extended - 80,000 Steps

Run 2 extended to 80,000 steps (~42B tokens). Currently at step 60,000 of 80,000; ETA April 24, 2026.

Genesis 1B, Run 2: 3x Throughput, Same Hardware

Redesigning Genesis 1B from 20 to 32 layers. Same param count, same GPUs, 3x training throughput.

The Optimizer State Bug: A Silent Failure in DCP Resume

A silent AdamW state bug during Run 1 that produced a false recovery on poisoned weights.

The Genesis Manifesto

Data sovereignty, constitutional alignment, and why the future of AI is local, private, and personality-first.

Fixing FSDP Checkpoint Deadlocks on 2x RTX 4090

How DCP sharded checkpoints and CPU-offload resume fixed deadlocks on consumer GPUs without NVLink.

Mapping the Mind of Qwen 3.5 9B

A sparse autoencoder for mechanistic interpretability: zero dead features, 16,384 dimensions.

Build with the Libre stack

Tell us where Libre Claw, Libre Phone, local LLM infrastructure, or custom AI agents fit into your workflow. We'll get back to you within 24 hours.
